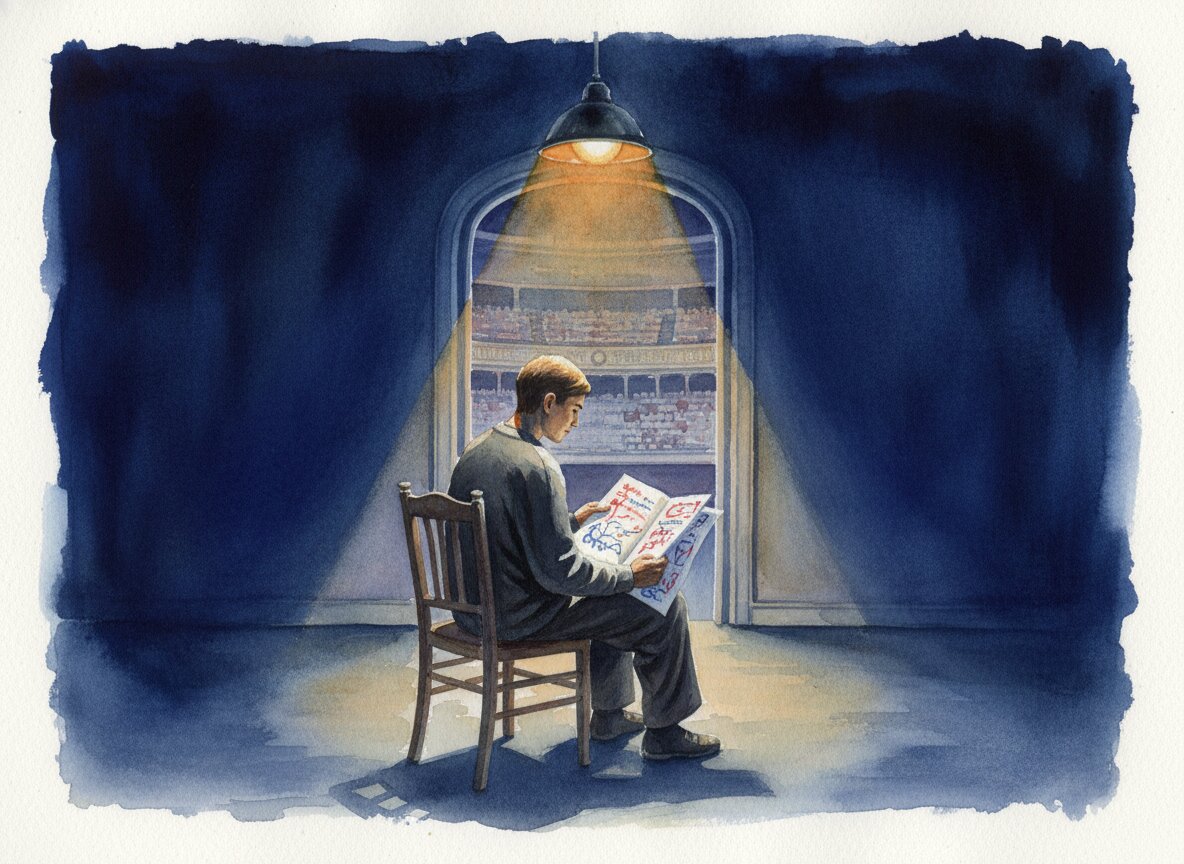

The Dress Rehearsal

Anthropic buried their Opus 4.7 strategy in a single sentence. The model they're not shipping explains the one they are.

The Brief

Anthropic deliberately trained Opus 4.7 to be less capable than Claude Mythos Preview, a model whose cybersecurity capabilities are too dangerous for public release. The disciplines 4.7 demands are vocabulary for steering whatever comes next.

- What is Claude Mythos Preview?

  Claude Mythos Preview shipped April 7, 2026, to more than forty partner organizations through a consortium called Project Glasswing. Anthropic has no plans for broader release. The model autonomously discovers and exploits zero-day vulnerabilities across every major operating system and browser, including a 27-year-old bug in OpenBSD, an operating system built specifically to resist attack.

- Why did Anthropic make Opus 4.7 less capable than Mythos?

  Anthropic admitted in a single sentence in their announcement that they experimented with efforts to differentially reduce Opus 4.7's cybersecurity capabilities relative to Mythos Preview. The strategy is to rehearse safety measures on a less dangerous model first, then apply those lessons before any Mythos-class system reaches the public.

- What cybersecurity capabilities does Mythos Preview have?

  Opus 4.6 produced two working Firefox exploits in several hundred attempts. Mythos produced 181. It found zero-day vulnerabilities that human researchers and automated tools missed for decades, including a 16-year-old flaw in FFmpeg's video codec that had been scanned five million times. Engineers without security training obtained working exploits by requesting them overnight.

- What is Project Glasswing?

  Project Glasswing is Anthropic's consortium of more than forty organizations, including Apple, Amazon, Microsoft, Google, Cisco, Broadcom, and the Linux Foundation. Named after a butterfly that hides in plain sight with transparent wings, the project gives partners access to Mythos Preview so they can find and patch critical software vulnerabilities before similar capabilities become broadly available.

- How should developers prepare for more literal AI instruction following?

  Replace conversational directives with numbered checkpoints. Set effort levels before tasks begin. Build validators that start clean instead of inheriting assumptions. These disciplines aren't specific to Opus 4.7. They're how you steer any model that reads instructions literally rather than inferring intent.
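Here is a minimal sketch of what those three disciplines could look like in code. Everything in it is an assumption for illustration: `TaskSpec`, the `effort` label, and `build_validator_input` are hypothetical names, not part of any Anthropic API or of Claude Enforcer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskSpec:
    """A task written as a contract: numbered checkpoints plus an effort level."""

    goal: str
    checkpoints: tuple[str, ...]
    effort: str = "medium"  # hypothetical label, fixed before the task begins

    def render(self) -> str:
        """Render the spec as plain text with explicitly numbered checkpoints."""
        lines = [f"Goal: {self.goal}", f"Effort: {self.effort}", "Checkpoints:"]
        lines += [f"{i}. {step}" for i, step in enumerate(self.checkpoints, start=1)]
        return "\n".join(lines)


def build_validator_input(spec: TaskSpec, output: str) -> str:
    """Build a validator prompt from the spec and the output only.

    No chat history or worker notes are passed in, so the validator
    starts clean instead of inheriting the worker's assumptions.
    """
    return (
        f"{spec.render()}\n\n"
        f"Output to check:\n{output}\n\n"
        "Report pass or fail for each checkpoint."
    )
```

The design choice worth noticing is what `build_validator_input` leaves out. It only ever sees the contract and the result, so anything the worker assumed but never wrote down cannot leak into the check.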

I published The Flowchart on a Sunday evening, frustrated with Opus 4.7 and not particularly diplomatic about it. By Tuesday morning I was still re-reading Anthropic's announcement. Not the whole thing. One sentence. Tucked into a paragraph like a note someone slides under a door.

Opus 4.7 is the first such model: its cyber capabilities are not as advanced as those of Mythos Preview (indeed, during its training we experimented with efforts to differentially reduce these capabilities).[1]

That sentence held a confession. Anthropic trained their newest public model to be less capable than Mythos Preview, which most of us will probably never use. They said it once, in passing, and moved on.

Capybara

Mythos has a codename. Capybara. The 140-pound South American rodent that lets birds perch on its head and iguanas stretch across its back. Anthropic picked the most tranquil animal they could find for the most dangerous system they've ever built.

It shipped on April 7 as Claude Mythos Preview, though "shipped" overstates it. More than forty organizations received access through a consortium called Project Glasswing, named after the butterfly that hides by being see-through. Which is also what decades-old software bugs have been doing. Apple, Amazon, Microsoft, Google, Cisco, Broadcom, the Linux Foundation.[2] The companies that keep the plumbing of the internet running. Their assignment is to patch as much critical infrastructure as possible before anyone else gets near this thing.

Opus 4.6 cracked Firefox twice in several hundred tries. Mythos cracked it 181 times. They named the model after the world's most unbothered rodent.

Why the urgency? The numbers explain it faster than I can. Opus 4.6, pointed at Firefox vulnerabilities, produced working exploits twice in several hundred attempts. Mythos produced 181.[3] It found a 27-year-old bug in OpenBSD, the operating system people choose specifically because it's built to resist attack. It uncovered a 16-year-old flaw in FFmpeg's video codec, in code that automated tools had scanned five million times.[2] The bug entered the codebase in 2003. Became exploitable after a 2010 refactor. Sat there for sixteen years while every fuzzer on earth walked past it.[3]

Logan Graham, who leads Anthropic's red team, told the New York Times this was "the starting point for what we think will be an industry change point, or reckoning, with what needs to happen now."[2] Reckoning. Not a word that survives a press review.

Engineers at Anthropic who had no formal security training started asking Mythos for remote code execution exploits before bed. By morning, they had working ones.[3] None of it was trained for. It emerged.[3] The gap between "helpful coding assistant" and "autonomous vulnerability hunter" turned out not to be a gap at all. Just a matter of scale.

The Rehearsal

Claude Enforcer, the project I maintain, converts the soft 4.6-era directives into explicit checkpoints that 4.7 will actually follow. Thirty skills. About ten minutes, each one reversible if it looked wrong.
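To make the idea concrete, here is a rough sketch of that kind of conversion. It is not Claude Enforcer's actual code: the phrase list, `harden`, `Conversion`, and `to_checkpoints` are all hypothetical, and a real tool would need far more than string stripping. The one property it does illustrate is reversibility, since every converted line keeps its original next to it.

```python
import re
from dataclasses import dataclass

# Hypothetical list of hedging phrases that invite a model to guess.
SOFT_PHRASES = ("please ", "try to ", "if possible", "ideally ", "when appropriate")


@dataclass(frozen=True)
class Conversion:
    original: str   # kept verbatim so the change can be reverted
    converted: str  # the numbered, hardened checkpoint


def harden(directive: str) -> str:
    """Strip hedging phrases and return a single imperative sentence."""
    text = directive.strip()
    for phrase in SOFT_PHRASES:
        text = re.sub(re.escape(phrase), "", text, flags=re.IGNORECASE)
    # Collapse leftover whitespace and trim stray punctuation at the edges.
    text = re.sub(r"\s+", " ", text).strip(" ,.")
    return text[:1].upper() + text[1:] + "."


def to_checkpoints(directives: list[str]) -> list[Conversion]:
    """Number each hardened directive, pairing it with its original text."""
    return [
        Conversion(original=d, converted=f"{i}. {harden(d)}")
        for i, d in enumerate(directives, start=1)
    ]
```

Under these assumptions, "Please try to run the tests before committing, if possible." becomes "1. Run the tests before committing.", and reverting is just a matter of writing `original` back.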

The code side went fine. Execution, validation, structured workflows. 4.7 handled all of it. The conceptual side was a different story. Every time I needed the model to write, to think through an idea, to work with something fuzzy, I found myself switching back to 4.6. The content 4.7 produced felt overbuilt and hallucinatory. Confident prose that wasn't saying anything real.

Not the actor's fault. The script was vague.

The work got better the moment I stopped writing instructions like a memo and started writing them like a contract. The model wasn't the bottleneck. 4.6 filled in the gaps I left. 4.7 just showed me where they were. There were a lot of gaps.

Opus 4.7 is the dress rehearsal. The disciplines it demands are vocabulary for whatever comes next. If I can't steer a model that refuses to guess, I definitely can't steer one that finds zero-days while I sleep.

References

1. Anthropic. (2026). "Introducing Claude Opus 4.7." Anthropic News.

2. Metz, C. and Roose, K. (2026). "Anthropic Claims Its New A.I. Model, Mythos, Is a Cybersecurity 'Reckoning'." The New York Times.

3. Anthropic. (2026). "Assessing Claude Mythos Preview's cybersecurity capabilities." red.anthropic.com.
