The Island

AI without the internet doesn't get stale. It gets stranded. It learned everything from us, and we haven't stopped talking.

The Brief

Every major AI model has converged to roughly the same capability. The differentiator is not which model you use but whether it can reach the internet. A free open-source model with web access outperformed ChatGPT before OpenAI added browsing. Without live human content, AI doesn't just get stale. It gets stranded.

- Why are AI models no longer a competitive advantage?

- Stanford's 2025 AI Index shows that the smallest model to score above 60% on the MMLU benchmark shrank from 540 billion parameters in 2022 to 3.8 billion in 2024. The gap between top models collapsed to measurement error. Every major model converged to roughly the same capability, so the choice of model stopped mattering.

- What makes AI useful if the model doesn't matter?

- Internet access. A free open-source model with web search outperformed ChatGPT before OpenAI added browsing. The model didn't matter. What mattered was whether it could reach current research, code, news, and conversation. Live information is what makes AI useful.

- What is model collapse and why does it matter?

- Model collapse happens when AI trains on its own output instead of human-written content. The models degrade rather than improve. Researchers found that without fresh human writing, the training data narrows until the model loses capability. AI can't outgrow us. It still needs what we write.

- What should businesses focus on instead of choosing an AI model?

- Stop asking which model to use. Ask what it can connect to. A model plugged into current research, code, and conversation will outperform a premium model running in isolation. The differentiator was never the intelligence. It was the cable.

Two years ago I downloaded Llama 3.0 from Hugging Face and ran it on my own machine. Meta's open-source model. Free. At the time, ChatGPT was the product everyone was paying for. Billions in funding. Massive infrastructure. The most famous AI on the planet.

Llama beat it.

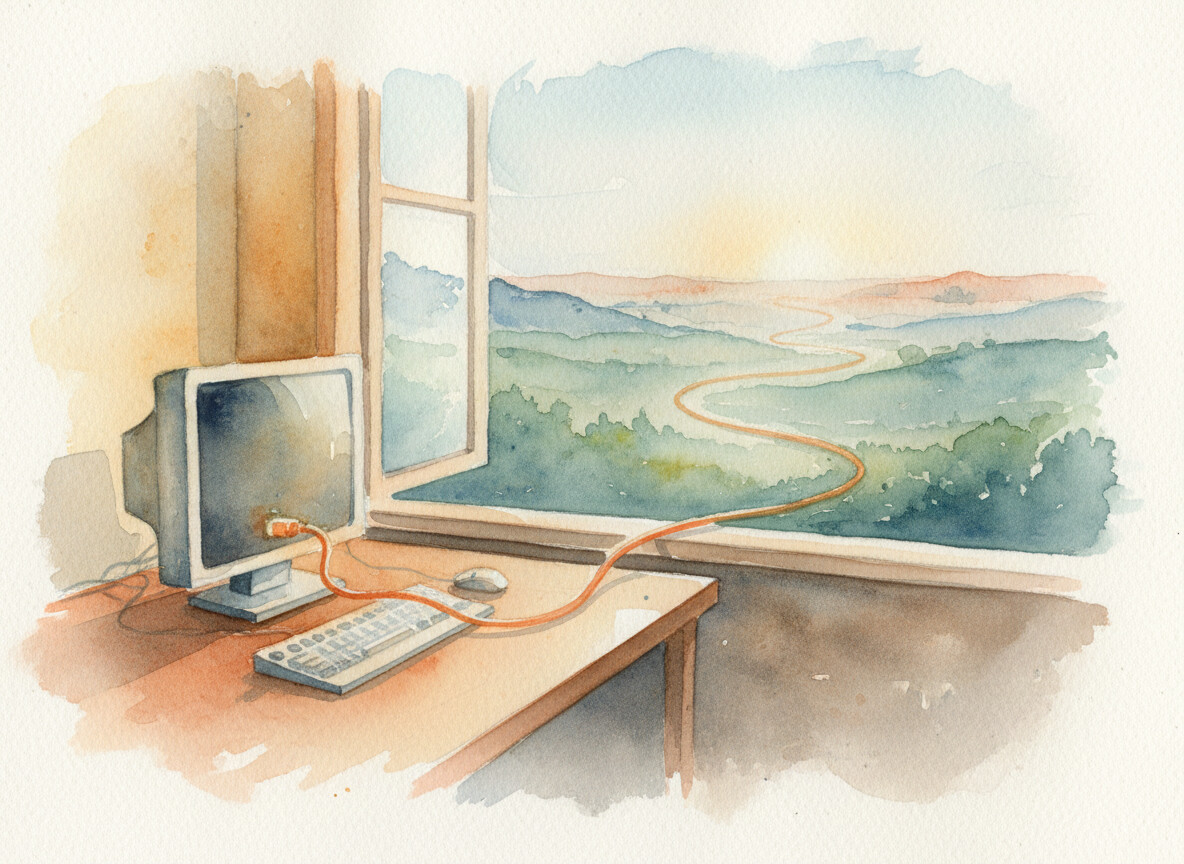

Not because it was smarter. Llama 3.0 was a smaller model by every measure. But my setup gave it something ChatGPT didn't have yet. Access to the internet.

That's it. A free model on a home computer, plugged into the web, outperformed the most heavily funded AI product in history. The difference wasn't intelligence. It was a cable.

Billions in funding. One Ethernet cable.

I figured OpenAI would catch up, and they did. When they finally added web search, they described the plugin as giving ChatGPT "eyes and ears."[1] Think about that. The most talked-about AI product in the world shipped without the ability to look anything up. The fix was described, by the people who built it, as giving their creation the ability to see and hear.

Bing and Google built AI into search too. And suddenly everyone had the same realization I'd had on my home machine a year earlier.

The model was never the point.

I started paying attention to the benchmarks after that. Stanford's 2025 AI Index put numbers to what I'd already seen.[2] In 2022, the smallest model to score above 60% on the MMLU benchmark needed 540 billion parameters. By 2024, a model with 3.8 billion parameters matched it. A 142-fold reduction. The gap between the top models collapsed to what researchers called "measurement error territory." MIT Sloan Management Review asked the obvious question: "How can AI be the centerpiece of a sustained competitive advantage when everyone has it?"[3]

It can't. The models have plateaued. Every major model converged to roughly the same capability, and the race to be the smartest in the room ended in a tie.

So what actually makes the difference? The same thing that made Llama beat ChatGPT on my kitchen table. Connection to what human beings are actually producing. The arguments, the corrections, the code commits, the forum posts, the news.

Seven billion people still typing.

Cut that connection and something worse than stale data happens. When models train on their own synthetic output instead of human content, they degrade.[4] Researchers call it "model collapse." One researcher described it as "the computer-science version of inbreeding." We built this intelligence from everything humanity ever wrote, and it turns out it can't survive without us continuing to write.
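For readers who want to see the narrowing rather than take it on faith, here is a deliberately crude toy in Python. It is not how the researchers behind model collapse ran their experiments. It assumes a "model" is nothing but a table of word frequencies, starts that table from a Zipf-shaped stand-in for human writing, and uses nucleus (top-p) truncation as a hypothetical stand-in for a model favoring its most likely outputs. Each generation learns only from what the previous one wrote. The function names are illustrative, not from any library.

```python
"""Toy illustration of model collapse (a hypothetical sketch, not a real training loop).

Assumptions: each "model" is just a probability table over words, and each
generation emits text with nucleus (top-p) truncation, so its rarest words are
never written. The next generation is fit only on that output.
"""


def zipf_distribution(vocab_size: int) -> dict[str, float]:
    """Stand-in for human writing: the k-th most common word has weight 1/k."""
    weights = {f"word{k}": 1.0 / k for k in range(1, vocab_size + 1)}
    total = sum(weights.values())
    return {word: w / total for word, w in weights.items()}


def generate_with_top_p(dist: dict[str, float], top_p: float) -> dict[str, float]:
    """Keep the most probable words until their mass reaches top_p, then renormalize.

    The result plays the role of "the model's synthetic output": whatever tail
    it drops is absent from the next generation's training data.
    """
    kept: dict[str, float] = {}
    mass = 0.0
    for word, p in sorted(dist.items(), key=lambda item: item[1], reverse=True):
        if mass >= top_p:
            break
        kept[word] = p
        mass += p
    return {word: p / mass for word, p in kept.items()}


def simulate(vocab_size: int = 50, generations: int = 10, top_p: float = 0.9) -> list[int]:
    """Return the number of distinct words in each generation, starting from human data."""
    dist = zipf_distribution(vocab_size)
    sizes = [len(dist)]
    for _ in range(generations):
        # The next model sees only what the previous model wrote.
        dist = generate_with_top_p(dist, top_p)
        sizes.append(len(dist))
    return sizes


if __name__ == "__main__":
    for generation, size in enumerate(simulate()):
        print(f"generation {generation}: {size} distinct words")
```

The count of distinct words drops generation after generation until only a handful of common words remain. The rare words go first, and nothing inside the loop can bring them back; only new input from outside, which in this toy means fresh human text, could.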

If the internet went dark tomorrow, every AI on the planet would be stranded. Not limited. Not outdated. Useless.

John Donne wrote it four hundred years ago: "No man is an island, entire of itself." He meant that isolation doesn't just limit a person. It diminishes them. That line won't leave me alone, because the same turns out to be true for the intelligence we built from a million human voices.

People keep asking me which model to use. Wrong question. They're all the same engine now. The only question that matters is what you connect it to.

No model is an island. The ones that work still have the cable attached.

References

1. CMSWire. (2023). "OpenAI Incorporates Web Search Into ChatGPT With Web Browser Plugin." CMSWire.

2. Stanford HAI. (2025). "AI Index 2025: State of AI in 10 Charts." Stanford HAI.

3. Wingate, D., Burns, B.L., & Barney, J.B. (2025). "Why AI Will Not Provide Sustainable Competitive Advantage." MIT Sloan Management Review.

4. The Week. (2024). "All-Powerful, Ever-Pervasive AI Is Running Out of Internet." The Week.

Open Field Journal