The Pilot Graveyard

AI tuberculosis detection worked beautifully in Kenya. Then the grant ran out and nobody could pay for the subscription.

The Brief

This article examines why AI health interventions in developing countries succeed as pilots but collapse when donor funding ends. Drawing from a 2026 Frontiers in Digital Health study of programs in Kenya, Rwanda, Brazil, and India, it explores the pattern researchers call 'pilotitis' and argues that scaling AI is less about proving algorithms work than building systems that can sustain them.

- What is pilotitis in AI healthcare?

- Pilotitis describes a pattern where AI health interventions in developing countries show strong results during donor-funded pilot phases but collapse when external funding ends. Hospitals lose access to software subscriptions, cloud storage, and trained staff, leaving proven technology unused. The term comes from a 2026 Frontiers in Digital Health study.

- Why do AI health pilots fail to scale in developing countries?

- According to research examining programs in Kenya, Rwanda, Brazil, and India, five barriers prevent scaling: fragmented digital ecosystems, dependence on donor funding, weak regulatory frameworks, low clinician trust, and inadequate infrastructure like unreliable electricity and internet. The technology works but the surrounding system cannot sustain it.

- Did AI tuberculosis detection work in Kenya and Rwanda?

- Yes. AI-based diagnostic imaging tools piloted in Kenya and Rwanda showed strong results for tuberculosis detection. However, when donor funding ended, hospitals could not afford recurring software subscriptions and data storage costs, and the programs shut down despite their proven effectiveness.

- What does it take to scale AI in healthcare systems?

- The Frontiers in Digital Health study proposes five pillars: policy alignment with national health strategies, infrastructure interoperability, sustainable financing beyond donor grants, workforce development and digital literacy, and trust-building through transparent AI that clinicians help design. The core insight is that scaling requires building health systems that can work with algorithms, not just proving algorithms work.

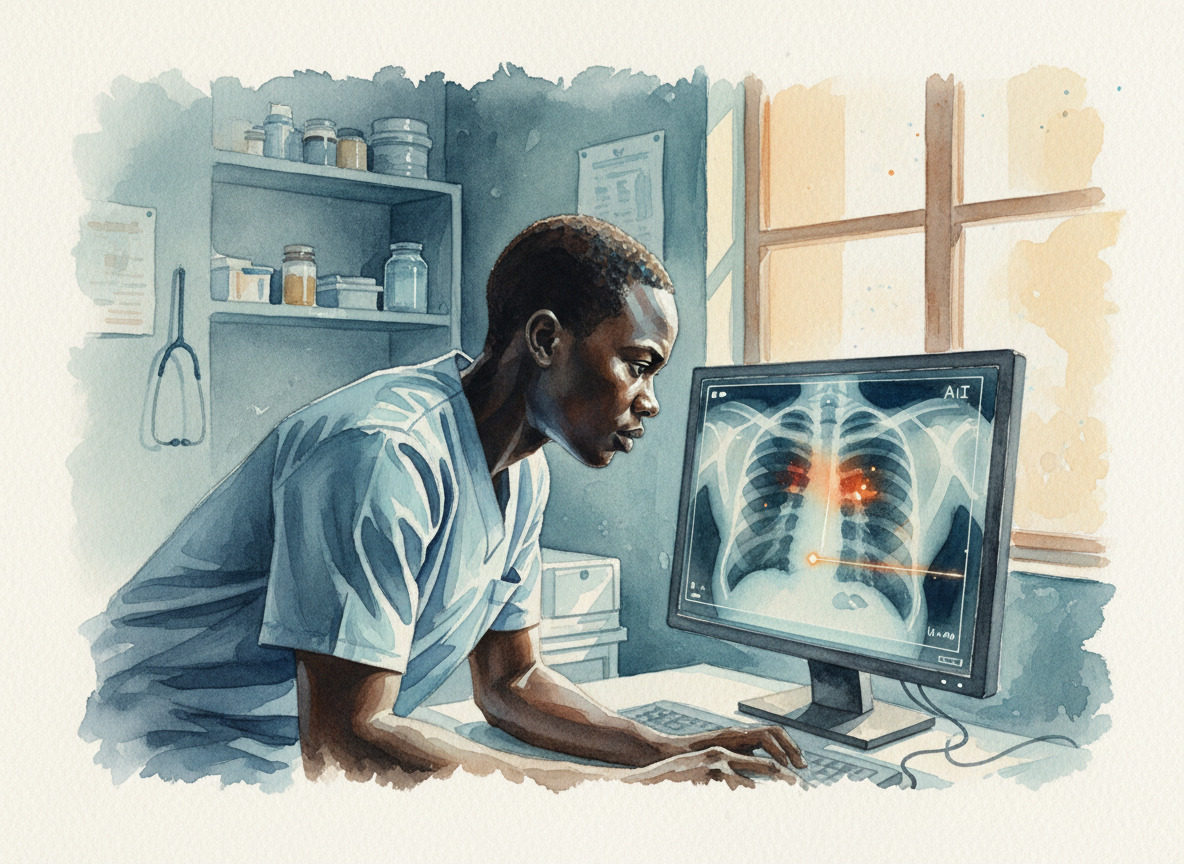

There's an AI system that can read a chest X-ray and spot tuberculosis in a clinic where the nearest radiologist is a four-hour drive. It was running in Kenya. It worked.1

I came across the story in a research paper from Frontiers in Digital Health. The paper wasn't celebrating the technology. It was trying to explain why it stopped. The researchers had a word for what happened, one I haven't been able to shake. "Pilotitis."1

And the pilots worked. That's the part that makes the rest of it so hard to read.

The algorithm worked. The system around it didn't.

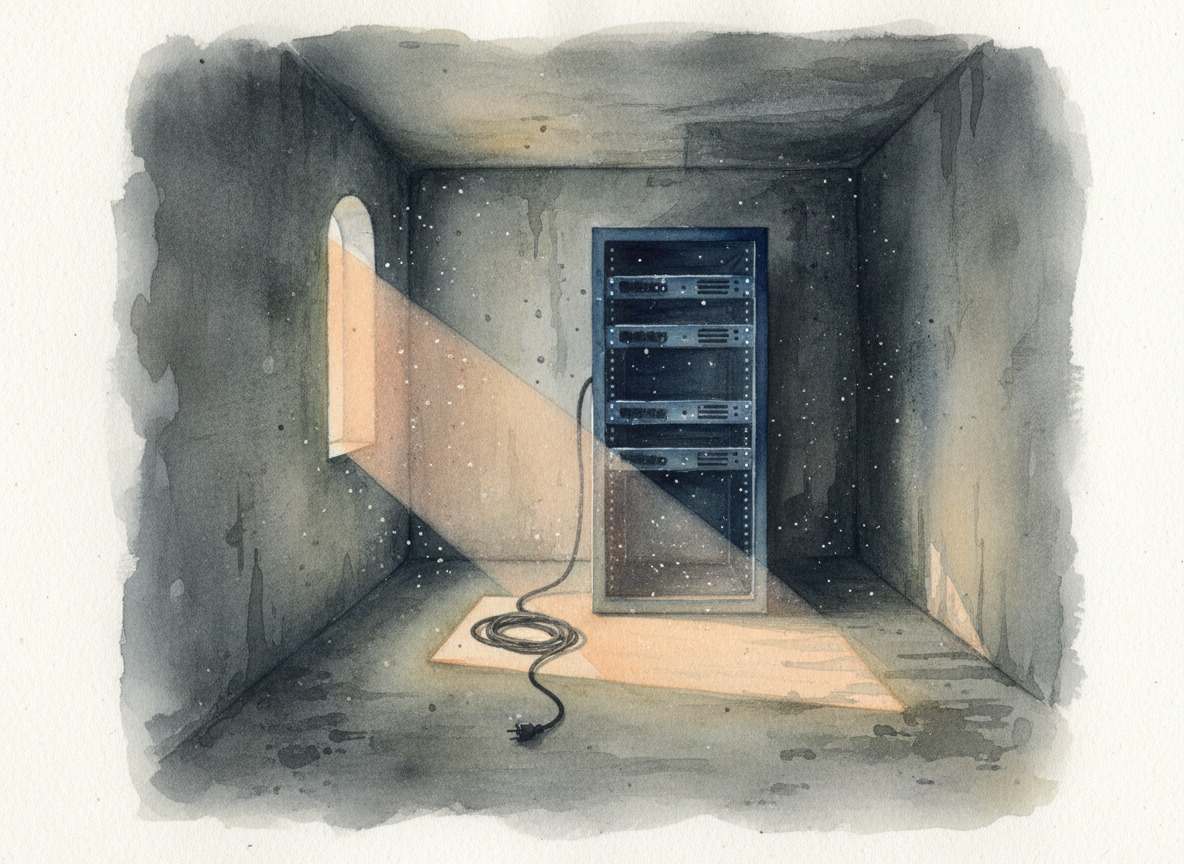

The TB diagnostic tools they piloted in Kenya and Rwanda? Strong clinical results. Accurate. Useful. The kind of outcome that makes a donor report shine.1 Then the grant period ended. The hospitals couldn't cover the recurring costs. Software subscriptions. Cloud storage. The trained staff who knew how to run the thing moved on to other projects. The servers went dark.

Pilotitis is the name for the whole cycle: high pilot activity, low policy integration. The technology proves itself, and then it just... stops. Not because it failed. Because nobody budgeted for the part that comes after success.1

This got me thinking about something familiar. I've watched the same pattern play out in businesses here at home, just with different price tags. A team runs a proof of concept. It works beautifully in the demo. Everyone applauds. Then someone asks who's paying for the API calls next quarter, and the room goes quiet.

The Frontiers study went deeper than most. The authors looked at Brazil, India, Kenya, Rwanda, and South Africa. Five countries, five different health systems, same outcome. In Brazil, getting municipal and federal health data to talk to each other required years of political alignment. India built a national digital health framework, but the AI pilots in radiology still ran in isolated silos. Sub-Saharan Africa had so many successful-then-abandoned projects that the researchers described the region as a pilot graveyard.1

Somewhere in East Africa, a server that could diagnose tuberculosis is collecting dust.

Five barriers kept showing up across every country. Digital systems that can't talk to each other. Donor money that disappears when the grant ends. Regulatory frameworks that haven't figured out who's liable when an algorithm gets it wrong. Then there's the human side. Clinicians who don't trust a black box reading their patients' scans. And the basics, too. Reliable electricity. Stable internet. The stuff that's not exciting enough to fund.1

The WHO has been saying something similar for years. Its guidance on AI for health emphasizes the same structural gaps: poor data quality, unclear accountability for algorithmic errors, no plan for what happens after the pilot ends.2

But the line from the paper that stopped me cold was simpler than any of that. "Scaling AI in healthcare is less about proving algorithms work, and more about building health systems that can work with algorithms."1

That sentence flips the whole question. We keep asking whether AI is ready for the world. Maybe the better question is whether the world is ready for AI. Not the technology itself, but the plumbing underneath it. The budgets, the governance, the boring infrastructure that nobody writes grants for.

I keep thinking about a hospital administrator in Nairobi or Kigali who watched that TB detection system work. Saw what it could do for her patients. And then had to explain to them why it wasn't available anymore. Not because the technology failed. Because the subscription expired and nobody had planned for the electric bill.3

References

1. Frontiers in Digital Health. (2026). "From pilot to policy: why AI health interventions fail to scale in developing countries."

2. World Health Organization. (2024). "Regulatory considerations on artificial intelligence for health."

3. This detail is an editorial composite drawn from multiple cases described in the Frontiers study, where TB diagnostic tools in Kenya and Rwanda lost funding for software subscriptions and data storage.

Open Field Journal