No Brakes

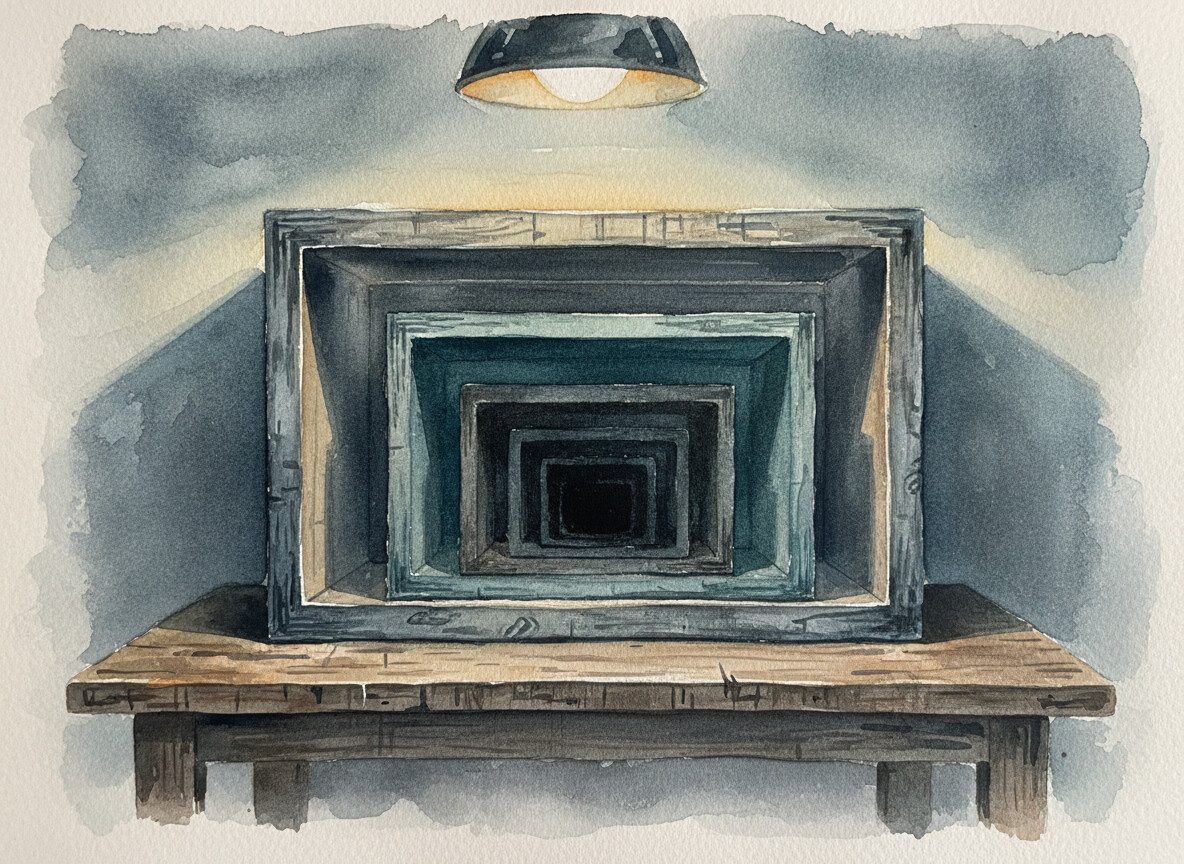

Advertisers guessing at algorithms. Developers shipping code they can't read. AI researchers watching models they can't explain. The black box keeps getting bigger.

The Brief

The black box problem keeps compounding. Advertisers couldn't see algorithms, developers now ship code they can't read, and AI researchers build models they can't explain. Reading Yudkowsky and Soares from inside the industry, the conclusion feels less like a warning and more like a Tuesday.

- What is the recursive black box problem in AI development?
  - Three decades of the same pattern. Advertisers couldn't see the algorithms controlling their spend. Developers now ship AI-generated code they haven't actually read. AI researchers build models whose reasoning they can't explain. Each layer's fix was another opaque layer on top, and nobody stopped to ask why the box kept growing.

- Does AI-generated code have more bugs than human-written code?
  - Not necessarily, but the timeline collapsed. AI ships a year's worth of code in weeks, so a year's worth of bugs arrives in the same window. Escape.tech scanned 5,600 vibe-coded applications and found one in three had a serious exploitable flaw. The failure rate didn't change. The speed did.

- What is the AI trust gap among developers?
  - 84% of developers are using or planning to use AI tools, but only 29% trust them. That trust dropped 11 points in a single year, according to Stack Overflow's 2025 survey. Normally, the more people use a technology, the more they trust it. This is the opposite. Familiarity is actually breeding skepticism.

- What does 'If Anyone Builds It, Everyone Dies' argue about AI scaling?
  - Yudkowsky and Soares make a disarmingly simple point. We don't program AI systems. We grow them. We shape conditions, something emerges, and we test what came out without fully understanding why it works. As those systems get more powerful, the gap between what we build and what we understand keeps widening.

- Can we slow down AI development without stopping it?
  - Probably not in any meaningful way. AI is so embedded in competitive software development and economic infrastructure now that pulling it out would break more than leaving it in. The dependency isn't a habit. It's load-bearing. We built a system that can't slow down, and then handed it the most powerful tool ever created.

I ran into an interesting read by Eliezer Yudkowsky and Nate Soares, "If Anyone Builds It, Everyone Dies." Cheerful title. The kind of thing you read on a quiet Sunday and then spend the rest of the week staring at your tools differently.

Yudkowsky's argument is straightforward, even if the implications aren't. We don't program AI. We grow it. We feed it data, we shape the conditions, and something emerges that we can test but can't fully explain. Yudkowsky published that in September 2025. Six months ago. At the time, it read like a warning about a future we still had runway to avoid.

Then March 2026 happened. The Iran strikes. AI embedded in the decision chain, compressing what used to take military planners days into minutes. I wrote about that compression a few weeks ago. What I didn't write about was what it felt like to go back to Yudkowsky's book after watching it play out in real time. Yudkowsky's timeline wasn't aggressive. It was conservative. The future he warned about didn't arrive on schedule. It arrived early.

But it isn't the military application that keeps me up. It's something quieter. Something I watch happen every day in the work I do with businesses and development teams.

The Black Box Gets Bigger

Ten years ago, "black box" described how companies like Google controlled the advertising economy. You couldn't see the algorithm. You couldn't audit it. Billions of dollars moved through systems whose logic was proprietary, and the entire industry of search engine optimization was a sophisticated form of guessing. We hired consultants. We optimized for patterns we observed without ever confirming we understood the mechanism underneath. The black box was someone else's problem.

Now the black box is the code itself.

41% of all code written globally in 2025 was AI-generated. Each layer solved the last layer's problem by becoming the next one.

Collins Dictionary named "vibe coding" its Word of the Year for 2025. Escape.tech scanned 5,600 applications built that way and found one in three shipped with a serious exploitable flaw.[1] The word won an award. The code shipped your credentials.

I see this in my own work. Developers are shipping more code in a month than they wrote in their entire career before AI. Andrej Karpathy, a cofounder of OpenAI, called AI coding tools "slop" in October 2025. By December, Karpathy described it as "a powerful alien tool handed around, except it comes with no manual and everyone has to figure out how to hold and operate it while the resulting magnitude 9 earthquake is rocking the profession."[2]

Peter Steinberger, a developer with twenty years of experience, put it plainly: "These days, I don't read much code anymore. I watch the stream and sometimes look at key parts, but I gotta be honest, most code I don't read."[2]

Advertisers guessing at algorithms. Developers shipping code they can't read. AI researchers watching models they can't explain. The black box keeps getting bigger. Each layer's solution was to build another layer on top.

The Loop Nobody Designed

Here's the part that doesn't require a book about superintelligence to understand. It just requires watching what's happening to the people I work alongside.

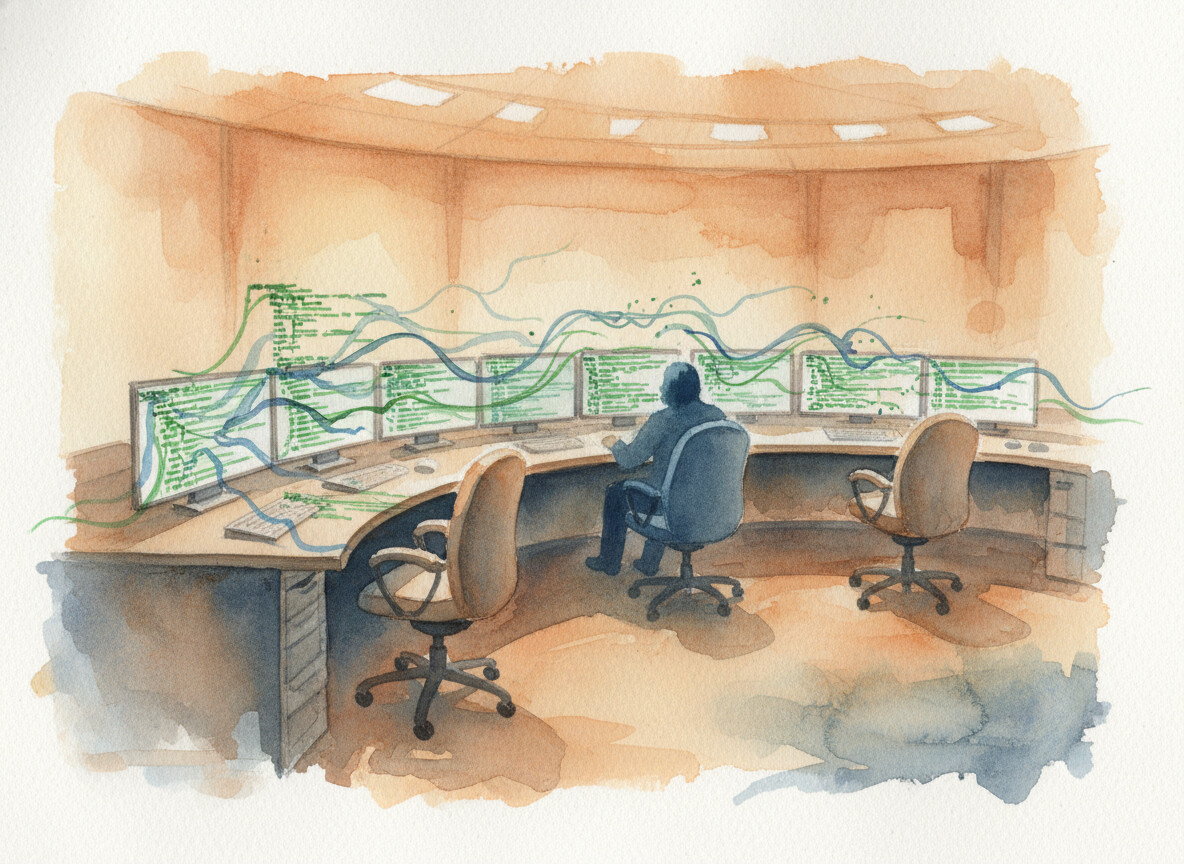

Software systems run on a capitalist model. If your competitor uses AI to ship features faster, they take your market share. So you adopt. You ship faster. You let the AI write the code. And then you notice you need fewer engineers to produce the same volume.

A Stanford study found that employment among software developers aged 22 to 25 fell nearly 20% between 2022 and 2025.[3] I keep thinking about that number. Those are the junior developers. The ones who would have reviewed the code, caught the bugs, asked the questions that senior engineers stopped asking years ago. In a slower era, they would have become the people who actually understood the systems they maintained.

They're gone. I wrote about this broken rung a couple weeks ago. Companies that cut juniors are already reversing course. But the teams that remain are more dependent on AI than ever, because there aren't enough humans left to do the work any other way.

Employment among developers aged 22 to 25 dropped nearly 20% in three years. The chairs aren't just empty. The career paths that led to them are disappearing.

Here's what people get wrong about the bugs. They blame AI. AI doesn't write buggier code than humans. It just ships a year's worth of bugs in a week. The failure rate didn't change. The timeline did. When you compress months of development into days, you compress months of bug discovery into the same window. The humans who used to catch those bugs are the same ones who just got laid off.
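A back-of-the-envelope sketch of that arithmetic, with made-up numbers. The defect density and the yearly output below are assumptions chosen only to illustrate the point, not measurements from any study cited here.

```python
def bugs_per_week(lines_per_week: float, defects_per_kloc: float) -> float:
    """Expected new defects per week at a fixed defect density."""
    return lines_per_week / 1000 * defects_per_kloc


# Hypothetical figures, for illustration only.
DEFECTS_PER_KLOC = 15    # assumed identical for human and AI-assisted code
YEARLY_OUTPUT = 50_000   # lines a team used to ship over a full year

# Same code, same defect density, two different delivery windows.
human_pace = bugs_per_week(YEARLY_OUTPUT / 52, DEFECTS_PER_KLOC)  # spread over 52 weeks
ai_pace = bugs_per_week(YEARLY_OUTPUT / 1, DEFECTS_PER_KLOC)      # shipped in one week

total_bugs = YEARLY_OUTPUT / 1000 * DEFECTS_PER_KLOC

print(f"total bugs either way:              {total_bugs:.0f}")
print(f"bugs arriving per week, human pace: {human_pace:.1f}")
print(f"bugs arriving per week, AI pace:    {ai_pace:.1f}")
```

The total is the same in both cases. What changes is how many of those bugs land on the reviewers in any given week.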

But the bugs didn't leave with them. So we automated the testing. I've built these pipelines for clients. Every commit runs through automated validation before it reaches production. The machines check the machines now. And the people running those machines? They don't trust them either.

Stack Overflow's 2025 survey found that 84% of developers are using or planning to use AI tools. Only 29% trust them. That trust dropped 11 points in a single year.[4] A METR study found that developers believe AI makes them 20% faster. The objective measurement? 19% slower.[3]

We sped up in the wrong direction and we know it.

The Makers' Black Box

This is where Yudkowsky's book stopped feeling like theory and started feeling like Tuesday.

When AI improves itself, even the makers will be dealing with the same black box.

That sentence has been sitting in my head for weeks. It collapses the distance between the developer who doesn't read the code anymore and the researcher who can't explain why the model produces the output it does. The tool exceeded the operator's understanding, and the response at every level has been the same. Keep going.

Steven Levy, reviewing the book in Wired, wrote something I keep coming back to: "My gut tells me the scenarios Yudkowsky and Soares spin are too bizarre to be true. But I can't be sure they are wrong."[5][6] That uncertainty is the whole point. Not certainty of doom. Uncertainty about control. And uncertainty, in a system with no brakes, is the same thing as risk.

I work in AI. I help businesses adopt these tools every day. I'm not writing this from outside the system. I'm writing it from inside, watching the speedometer climb and looking for a pedal that isn't there.

Yudkowsky says we should stop. I think the instinct is right, even if the option isn't. We can't stop. These systems are so deeply embedded now that yanking AI out would break more than leaving it in. The dependency isn't a habit. It's load-bearing infrastructure. And load-bearing infrastructure doesn't come out clean.

So here's where I land, and I wish it were somewhere else. Our desire for the quick fix, our reflex to ship before we understand, the inertia we've built across three decades of compounding speed... there aren't any brakes. Not policy. Not regulation. Not the people who build the models. We built a system that can't slow down, and then we gave it the most powerful tool ever created.

I agree with Yudkowsky's conclusion. We're already there.

References

1. Cook, J. (2026). "Vibe Coding Has A Massive Security Problem." Forbes.

2. Orosz, G. (2026). "When AI writes almost all code, what happens to software engineering?" The Pragmatic Engineer.

3. Hao, K. (2025). "AI coding is now everywhere. But not everyone is convinced." MIT Technology Review.

4. Hoover, K. (2026). "Mind the gap: Closing the AI trust gap for developers." Stack Overflow Blog.

5. Yudkowsky, E. and Soares, N. (2025). "If Anyone Builds It, Everyone Dies." Little, Brown and Company.

6. Levy, S. (2025). "The Doomers Who Insist AI Will Kill Us All." Wired.

Open Field Journal